Artificial Intelligence vs. Academic Integrity

May 8, 2023

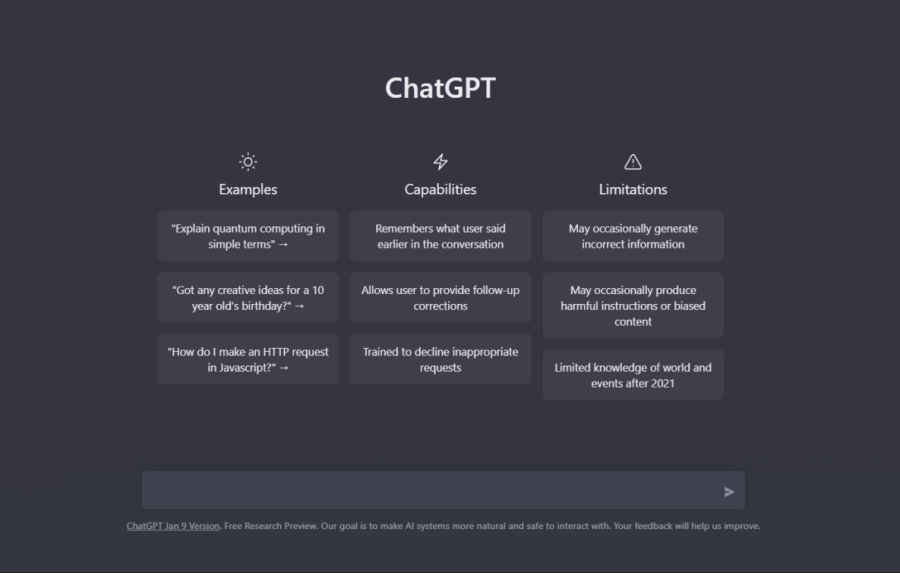

The new phenomenon of AI chatbots has to be concerning for students and teachers alike who care about academic integrity. The main concerns center around OpenAI’s ChatGPT, which was released in November 2022 and has become a popular tool for students to use on assignments. According to a Study.com survey of 1,000 students aged 18 and older, a whopping 89 percent admitted to using the bot for help on a homework assignment, 48 percent admitted to using it for an at-home test, and 53 percent admitted to using it for an essay. Using ChatGPT for a little help on a homework assignment could be understandable, but sitting back and letting it write an entire essay? That’s just unacceptable.

ChatGPT allows students to mindlessly copy and paste sentences and try to get credit for them. They don’t actually take in any information or learn anything from that approach. The nonsense that ChatGPT spits out doesn’t help anyone. As a test, I asked it to solve a physics problem, and after I asked it the exact same question three times, it had given me three different answers. ChatGPT has been updated and improved, but it is still deeply flawed. A major argument for chatbot technology is that students can learn from it, but when it can’t even do physics properly, what good is it to kids?

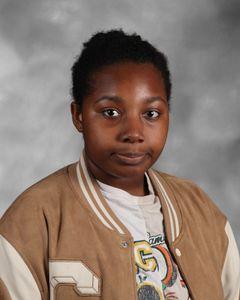

ChatGPT may not be able to do math or science particularly well, but it can do just enough to write passable essays. That, to me, is the most dangerous part of the technology. I was working with a friend on a group project a couple of weeks ago, and I noticed that his sentences seemed almost too sophisticated and detailed for his level of writing. Sure enough, when I peeked over his shoulder, I saw him feeding information into ChatGPT so that it would do his assignment for him. I was somewhat annoyed, but I thought the teacher would eventually catch on and dock him a few points. Instead, we both ended up getting the exact same grade. I probably did five times as much work, but apparently, that didn’t matter. It’s a little disheartening to see that effort doesn’t really matter in school, as long as you can scrape by cheating undetected. And it’s not just earning a false grade that is concerning. I’m sure everyone has had a class that either didn’t have a teacher or had a teacher who didn’t teach well, and that’s very frustrating. Students don’t learn anything and the class is essentially a waste of time. That’s the same experience that students will get if they use AI to push their way through a course. By leaning on the technology available to them, they don’t actually gain anything, even if they get a hollow grade and don’t get punished directly for their laziness.

If you give a student an inch, they’re going to take a mile. Most of the time, when kids use ChatGPT or another AI bot, it won’t be a one-off occurrence. A survey from Intelligent, an online magazine, found that 60 percent of college students who use ChatGPT use it on over half of their assignments. ChatGPT may be OK for helping kids through certain difficult problems and learning some concepts, but it is a tool that is ripe for exploitation. Kids just can’t be trusted to use it fairly and responsibly.

So how do we stop this growing phenomenon? For one, teachers can start matching the effort of students attempting to cheat with counter-cheating measures. These can range from plagiarism and AI detection tools to simply inspecting work more closely to make sure it actually sounds like the student’s writing. As students try harder and harder to game the system, teachers must work twice as hard to protect that system. After all, what’s the point of having grades if they don’t actually reflect a student’s effort and academic prowess?